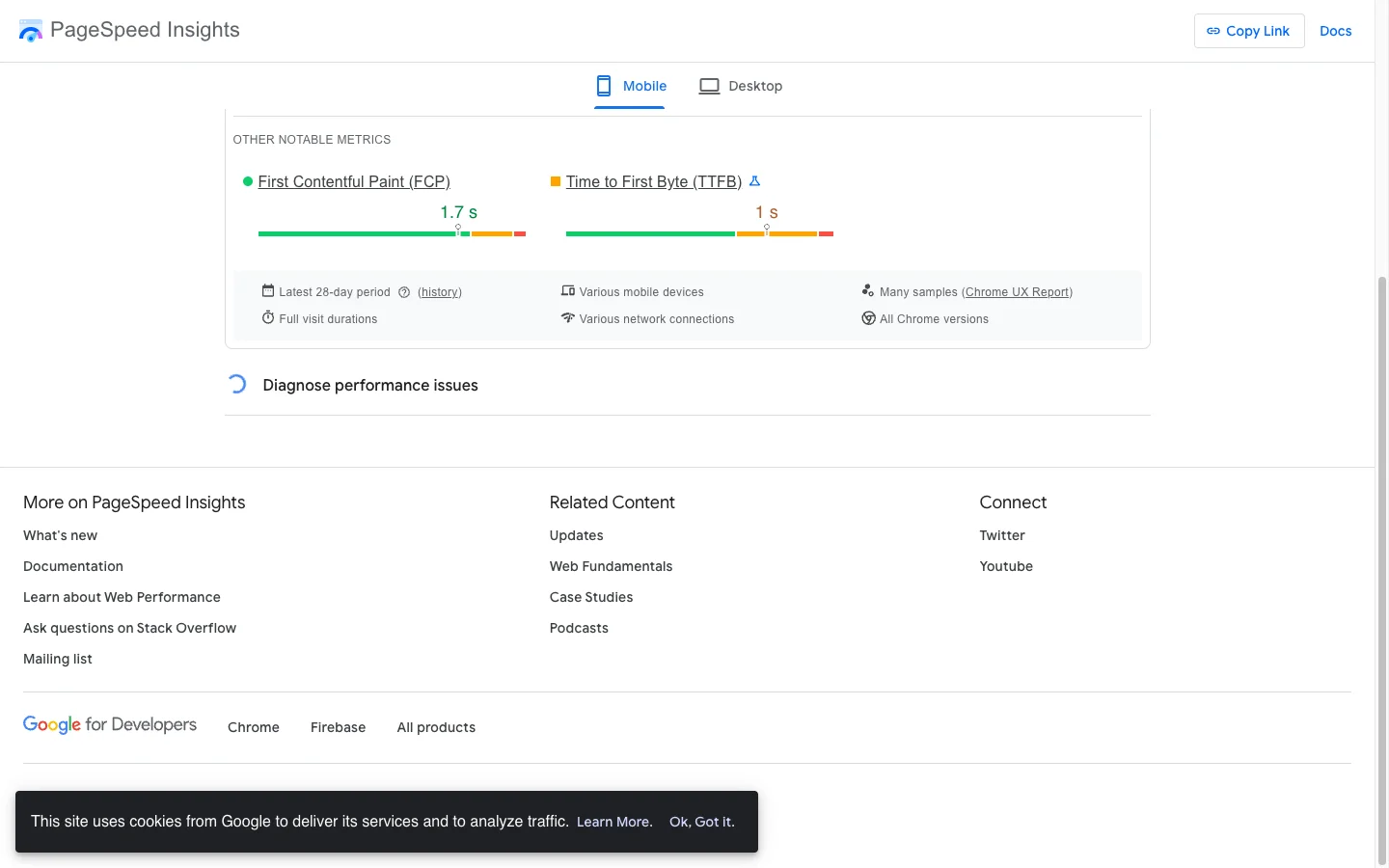

The PageSpeed Insights screenshot above is real. Same Shopify Plus product page, same Chrome session. Mobile Lighthouse score: 23 out of 100. Real-user CrUX: all five field metrics passing green, including the three Core Web Vitals (LCP, INP, CLS). LCP 2.1s. INP 129ms. CLS 0.05. FCP 1.6s. TTFB 0.7s.

This was the Enea Studio luxury jewelry store I worked on during a Core Web Vitals sprint. The full client message after we shipped the fixes is in the Enea Studio case study, but the headline number is what most Shopify operators stare at and lose sleep over: a Lighthouse mobile score in the low 20s next to a green CrUX dashboard.

If your store is in the same situation, you are not broken. The two numbers measure fundamentally different things, and Google only ranks on one of them. This post is the technical explanation of the gap, what is actually happening on Shopify specifically, and the eight-step playbook to close it where it matters.

TL;DR: Lighthouse is a lab tool. CrUX is the field dataset Google ranks on. Lighthouse simulates a single cold load on a throttled mobile device (1.6 Mbps, 4x CPU slowdown). CrUX aggregates 28 days of real Chrome users at the 75th percentile. The two diverge on Shopify because third-party app scripts blow up the simulated lab number while real users get warm caches, BFCache, and faster networks. For ranking, only CrUX matters. For debugging regressions, only Lighthouse matters.

Lighthouse vs CrUX at a glance

| Dimension | Lighthouse | CrUX |
|---|---|---|
| Data type | Lab (synthetic) | Field (real users) |
| Sample | One simulated cold load | 28 days of real Chrome users |
| Aggregation | Single run | 75th percentile (p75) |
| Network | Throttled to 1.6 Mbps / 750 Kbps / 150ms RTT | Whatever real users have (4G, 5G, wifi) |
| CPU | 4x slowdown multiplier | Real device CPUs |
| Affects Google ranking? | No | Yes (Core Web Vitals signal) |
| Metrics reported | FCP, LCP, TBT, CLS, Speed Index, score 0-100 | LCP, INP, CLS, FCP, TTFB at p75 |
| Update latency | Instant | ~28 days for full rollover |
| Best use | Diagnose regressions, CI alarms | Confirm real-user impact, ranking health |

What is Lighthouse?

Lighthouse is a synthetic lab tool from Google that runs a single deterministic audit on a throttled emulated mobile device and emits a 0 to 100 performance score. It does not feed into Google Search rankings in any way.

When you click the Analyze button on PageSpeed Insights, or run `lighthouse https://your-store.com` in your terminal, you are running Lighthouse. It loads the page once, in a clean profile and a controlled environment, and reports what it saw.

The default mobile configuration that PSI uses (documented in the Lighthouse throttling docs) is intentionally pessimistic:

- Network throttling: 1.6 Mbps download, 750 Kbps upload, 150 ms round-trip time, no packet loss. This is “the bottom 25% of 4G connections and the top 25% of 3G connections” per Google’s own description.

- CPU throttling: a constant 4x slowdown multiplier, which pushes a typical desktop CPU down to roughly mid-tier mobile performance.

- Throttling method: simulated. PSI loads the page on the host machine’s actual connection, then mathematically projects how the page would have loaded under throttled conditions via the Lantern simulator.

- Single cold load. No browser cache, no service worker activity, no BFCache. One sample.
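To see why this profile is so punishing, here is a deliberately naive back-of-envelope model in Python. It is not Lantern, which simulates a full request dependency graph; it only applies the headline numbers above (1.6 Mbps, 150 ms RTT, 4x CPU) to one script download and its execution. The three-round-trip setup cost, the function names, and the 240 KB / 60 ms inputs are illustrative assumptions.

```python
# Naive back-of-envelope model of Lighthouse's default mobile throttling.
# NOT the Lantern simulator: no dependency graph, no TCP slow start, no
# connection reuse. It only applies the documented headline numbers.

THROUGHPUT_BPS = 1.6e6  # 1.6 Mbps simulated download link
RTT_S = 0.150           # 150 ms round-trip time
CPU_SLOWDOWN = 4        # 4x CPU slowdown multiplier


def naive_download_s(size_bytes: int, round_trips: int = 3) -> float:
    """Rough time to fetch one resource on a cold connection.

    Assumes `round_trips` RTTs of connection setup (a hypothetical count
    standing in for DNS + TCP + TLS), then the payload streamed at the
    throttled throughput.
    """
    return round_trips * RTT_S + (size_bytes * 8) / THROUGHPUT_BPS


def throttled_task_ms(host_task_ms: float) -> float:
    """A main-thread task measured on the host, scaled by the CPU multiplier."""
    return host_task_ms * CPU_SLOWDOWN


if __name__ == "__main__":
    # Illustrative inputs: a ~240 KB app bundle with 60 ms of host execution.
    print(f"download: {naive_download_s(240_000):.2f} s")
    print(f"execution: {throttled_task_ms(60):.0f} ms")
```

Under these assumptions a 240 KB bundle needs about 1.65 seconds just to arrive, and 60 ms of host execution becomes 240 ms of simulated main-thread work. On a real 50 Mbps connection that is already open, the same download takes a few tens of milliseconds.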

The Lighthouse 10 through 12 performance score weights are documented at Chrome for Developers:

- TBT (Total Blocking Time): 30%

- LCP (Largest Contentful Paint): 25%

- CLS (Cumulative Layout Shift): 25%

- FCP (First Contentful Paint): 10%

- Speed Index: 10%

A 23 out of 100 mobile score on a Shopify PDP is almost mathematically forced when third-party app JavaScript inflates TBT and LCP under simulated 4x CPU plus 1.6 Mbps. TBT alone carries 30% of the score. Combined with LCP at 25%, more than half the score is hostage to render-blocking and parser-blocking app scripts running on a single emulated CPU core.
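The weighting can be sketched in Python. Each raw metric is mapped to a 0 to 1 score on a log-normal curve defined by two control points (the value that scores 0.9 and the value that scores 0.5), then the weights listed above combine them. The mobile control points in `MOBILE_CURVES` are my understanding of Lighthouse 10+'s values and should be checked against the current scoring docs; Lighthouse's own implementation also clamps scores per band, which this sketch omits.

```python
import math

# metric: (p10, median, weight). Control points are an assumption based on
# Lighthouse 10+ mobile curves; verify against the current scoring docs.
MOBILE_CURVES = {
    "FCP": (1800, 3000, 0.10),  # ms
    "SI": (3387, 5800, 0.10),   # ms
    "LCP": (2500, 4000, 0.25),  # ms
    "TBT": (200, 600, 0.30),    # ms
    "CLS": (0.1, 0.25, 0.25),   # unitless
}

# erfc(INVERSE_ERFC_ONE_FIFTH) == 0.2, which makes the p10 value score 0.9.
INVERSE_ERFC_ONE_FIFTH = 0.9061938024368232


def log_normal_score(value: float, p10: float, median: float) -> float:
    """Score a raw metric on a log-normal curve: median -> 0.5, p10 -> 0.9."""
    if value <= 0:
        return 1.0
    x_log_ratio = math.log(value / median)
    p10_log_ratio = -math.log(p10 / median)  # positive because p10 < median
    standardized = x_log_ratio * INVERSE_ERFC_ONE_FIFTH / p10_log_ratio
    return min(1.0, max(0.0, math.erfc(standardized) / 2))


def performance_score(metrics: dict[str, float]) -> float:
    """Weighted 0-100 performance score from raw metric values."""
    total = 0.0
    for name, (p10, median, weight) in MOBILE_CURVES.items():
        total += weight * log_normal_score(metrics[name], p10, median)
    return 100 * total
```

Under these control points, `log_normal_score(7900, 2500, 4000)` comes out at roughly 0.03, so a lab LCP near 8 seconds contributes almost nothing of its 25 points, and TBT behaves the same way once it reaches several seconds.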

What is CrUX (Chrome User Experience Report)?

CrUX is Google’s public dataset of real-user performance metrics, collected from opted-in Chrome users and aggregated at the 75th percentile over a 28-day rolling window. It is the only dataset Google uses for the Core Web Vitals page experience ranking signal.

CrUX is real telemetry, not a simulation. Every time an opted-in, eligible Chrome user loads your URL, their browser reports the metrics back to Google.

A user is eligible to contribute to CrUX only if all four conditions are met:

- Usage statistic reporting is enabled

- Browser history sync is turned on

- No Sync passphrase is set

- They are running Chrome on desktop (Windows, macOS, ChromeOS, Linux) or Android

This is critical and often missed: CrUX excludes Chrome on iOS entirely, because Chrome on iOS uses WebKit, not Blink. It also excludes Android WebView, other Chromium-based browsers such as Edge and Brave, and non-Chromium browsers such as Safari and Firefox. The dataset is a Chrome-only slice, so a store whose traffic is half iOS is reporting on at most half its real audience.

The aggregation window is 28 days, rolling, updated daily around 04:00 UTC (CrUX API docs). The reported number is the 75th percentile, meaning at least 75% of user experiences must meet the threshold for the metric to be classified as good. Origin-level data is available whenever the origin as a whole has enough traffic. URL-level data requires enough samples for that specific URL (community estimates put eligibility around 1,000 monthly visits per device class).
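The p75 rule is easy to misread, so here is a small Python sketch. It takes raw per-load samples, computes a nearest-rank 75th percentile, and buckets the result using the published good / poor boundaries. CrUX does this aggregation on Google's side over the 28-day window, so treat it as an illustration of the rule, not of Google's pipeline; `THRESHOLDS`, `p75`, and `classify` are names made up for the example.

```python
import math

# (good upper bound, poor lower bound) in ms, except CLS which is unitless.
THRESHOLDS = {
    "LCP": (2500, 4000),
    "INP": (200, 500),
    "CLS": (0.1, 0.25),
    "FCP": (1800, 3000),
    "TTFB": (800, 1800),
}


def p75(samples: list[float]) -> float:
    """Nearest-rank 75th percentile of per-page-load samples."""
    ordered = sorted(samples)
    rank = math.ceil(0.75 * len(ordered))  # 1-based rank
    return ordered[rank - 1]


def classify(metric: str, p75_value: float) -> str:
    """Bucket a p75 value as good / needs improvement / poor."""
    good, poor = THRESHOLDS[metric]
    if p75_value <= good:
        return "good"
    if p75_value <= poor:
        return "needs improvement"
    return "poor"


if __name__ == "__main__":
    enea = {"LCP": 2100, "INP": 129, "CLS": 0.05, "FCP": 1600, "TTFB": 700}
    print({m: classify(m, v) for m, v in enea.items()})
    # 24% slow loads: p75 still sits in the fast group.
    print(classify("LCP", p75([1800] * 76 + [6000] * 24)))
    # 30% slow loads: p75 lands in the slow group.
    print(classify("LCP", p75([1800] * 70 + [6000] * 30)))
```

Every Enea field value lands in "good". The last two calls show why p75 is forgiving: up to a quarter of page loads can be slow and the URL still passes, but once more than a quarter are slow, the p75 value jumps straight to the slow group.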

CrUX feeds three places you actually look at:

- The field-data tab on pagespeed.web.dev

- Google Search Console under Page Experience and Core Web Vitals

- Shopify admin under Online Store > Performance (Shopify pulls CrUX directly)
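If you would rather pull the same field data programmatically, the CrUX API exposes it through its `records:queryRecord` endpoint. The Python sketch below assumes you have created a CrUX API key (`API_KEY` is a placeholder), requests phone data for the three Core Web Vitals at URL level, and reads the p75 values. Only `fetch_p75` touches the network; the helper names are hypothetical.

```python
import json
import urllib.request

# Placeholder: a Chrome UX Report API key from your Google Cloud project.
API_KEY = "YOUR_API_KEY"
ENDPOINT = (
    "https://chromeuxreport.googleapis.com/v1/records:queryRecord?key=" + API_KEY
)


def build_query(url: str, form_factor: str = "PHONE") -> dict:
    """Request body for URL-level data; use "origin" instead of "url" for origin data."""
    return {
        "url": url,
        "formFactor": form_factor,
        "metrics": [
            "largest_contentful_paint",
            "interaction_to_next_paint",
            "cumulative_layout_shift",
        ],
    }


def extract_p75(response: dict) -> dict[str, float]:
    """Pull p75 values out of a queryRecord response (CLS arrives as a string)."""
    metrics = response["record"]["metrics"]
    return {name: float(m["percentiles"]["p75"]) for name, m in metrics.items()}


def fetch_p75(url: str) -> dict[str, float]:
    """POST the query and return p75 per metric (network call)."""
    body = json.dumps(build_query(url)).encode("utf-8")
    req = urllib.request.Request(
        ENDPOINT, data=body, headers={"Content-Type": "application/json"}
    )
    with urllib.request.urlopen(req) as resp:
        return extract_p75(json.load(resp))
```

When a URL or origin does not have enough eligible traffic, the API answers with a 404 (`urlopen` raises `HTTPError`), which is the programmatic equivalent of an empty field-data tab in PSI.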

Why does Google use CrUX, not Lighthouse, for ranking?

Google uses CrUX because it represents what real users actually experience. A synthetic lab score is a hypothesis. Real-user data is fact. The page experience ranking signal would be useless if it could be gamed by tuning a single emulated run.

Google has been explicit about this for years. Vercel’s writeup on Core Web Vitals and SEO summarizes it: “Google does not consider your Lighthouse score in any way for search ranking.” DebugBear’s ranking factor doc confirms it: “Only the field data is used as a ranking signal.”

This matters for two reasons:

- A 100/100 Lighthouse score does not save you. If your real users hit a 4-second LCP because of regional CDN issues, Lighthouse cannot see that, and CrUX will. Your ranking will reflect the field data.

- A 23/100 Lighthouse score does not sink you. If your real users hit a 2.1-second LCP because of warm caches and BFCache, the page experience signal is positive regardless of what the lab number says.

Shopify itself published a piece titled “Common Misconceptions About Site Speed Score” that walks through this distinction from the platform’s perspective. They retired the Lighthouse-derived speed score from the admin and replaced it with a field-data-driven Web Performance dashboard, which is a strong signal that even Shopify stopped trusting the lab number.

Why does Lighthouse fluctuate so much between identical runs?

Lighthouse is a single sample of a wide statistical distribution. Per Google’s own documentation, the median of five Lighthouse runs is roughly twice as stable as a single run, so a single click of the Analyze button is one random draw from that distribution.

The official Lighthouse variability doc names seven sources of noise:

- Page nondeterminism: A/B tests, ad rotation, dynamic content variations

- Local network variability: packet loss, last-mile congestion

- Tier-1 network variability: cross-geo hops, peering issues

- Web server variability: cold or warm origin state, shared hosting load

- Client hardware variability: laptop battery state, thermal throttling

- Client resource contention: other apps, antivirus, browser extensions, garbage collection pauses

- Browser nondeterminism: V8 JIT timing, render scheduler decisions

Field reports of 5 to 10 point swings between identical Lighthouse runs are common across the SpeedCurve, DebugBear, and BrowserStack ecosystems. A score that fluctuates this much is unreliable as a single number. Treat it as a distribution, never as a fixed measurement.

The practical rule: if you must use Lighthouse for a before/after comparison, run it 5 to 9 times in each state and compare the median. Anything else is noise dressed up as a measurement.
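A minimal Python sketch of that rule, assuming the `lighthouse` npm CLI and Chrome are installed locally. It shells out once per run, reads `categories.performance.score` from the JSON report (Lighthouse reports it on a 0 to 1 scale), and compares medians. The helper names are hypothetical; the CLI flags are standard Lighthouse options.

```python
import json
import statistics
import subprocess


def lighthouse_perf_score(url: str) -> float:
    """Run one default (mobile) Lighthouse audit and return the 0-100 perf score."""
    result = subprocess.run(
        [
            "lighthouse",
            url,
            "--only-categories=performance",
            "--output=json",
            "--output-path=stdout",
            "--quiet",
            "--chrome-flags=--headless",
        ],
        capture_output=True,
        text=True,
        check=True,
    )
    report = json.loads(result.stdout)
    return report["categories"]["performance"]["score"] * 100


def median_score(scores: list[float]) -> float:
    """Median of repeated runs; odd run counts give an actual observed run."""
    return statistics.median(scores)


def compare(before: list[float], after: list[float]) -> float:
    """Change in median score between two states (positive means improvement)."""
    return median_score(after) - median_score(before)
```

Typical use is `median_score([lighthouse_perf_score(url) for _ in range(5)])` for each state, with the machine, network, and Lighthouse version kept identical between the before and after sets.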

Why is the gap so big on Shopify specifically?

Shopify stores carry an average of 6 to 9 installed apps and 120+ scripts per page. Third-party JavaScript from review widgets, popups, chat, and analytics is the single biggest contributor to the Lighthouse-vs-CrUX divergence on Shopify, because these scripts execute on the simulated single CPU core under 4x throttling but get warm-cached, BFCached, or skipped entirely for real returning users.

The most authoritative public dataset on Shopify performance is the Hyperspeed audit of 1,166 Shopify stores. The findings are sobering:

- Average mobile PageSpeed score: 30 out of 100

- Average LCP: 11.9 seconds

- Average TBT: 8.8 seconds

- Average page weight: 11.0 MB

- Average scripts per store: 120+

- Total unique third-party scripts across the dataset: 39,503

- 45% of all HTTP requests go to third-party origins

- 97% of stores fail to preload the LCP image

- 72% still serve legacy JPEG/PNG instead of WebP/AVIF

The per-app cost is what makes the lab number explode. A few benchmarks I have collected and verified across audits:

- Klaviyo onsite: ~150ms+ per pageview, with the main loader spawning roughly 23 child scripts contributing ~1.2 seconds of combined execution

- Yotpo: 250 to 450ms added per page, four scripts ~240 KB

- Judge.me: ~30 to 60 KB total, near-zero Lighthouse drop (clean implementation)

- Rebuy Engine: ~172ms blocking time

- Microsoft Clarity: ~95ms, present on 42% of stores

- Gorgias chat: ~2,200ms total weight where present

- Stack of GA4 + Facebook Pixel + GTM + Clarity: ~168ms blocking from analytics alone, before any product or upsell logic

These scripts hammer Lighthouse disproportionately because:

- They are typically render-blocking or parser-blocking until proven otherwise. Shopify’s own ParserBlockingJavaScript theme check exists precisely because the platform recognizes this pattern.

- They run on a single simulated CPU core under 4x throttling. A 30ms task on the host becomes 120ms in the projection, then competes with every other app’s 120ms task.

- They block FCP, push out LCP, and inflate TBT. Three of the five Lighthouse score weights, totaling 65%.

- App Embeds inject globally by default. Unless the merchant explicitly scopes a review widget to product templates only, it loads on cart, account, and even checkout-related pages.
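The 30 ms to 120 ms point is worth working through, because TBT only counts the portion of each main-thread task beyond 50 ms. A minimal Python sketch, assuming every task falls inside the FCP-to-Time-to-Interactive window that real TBT measures; the six 30 ms app tasks are hypothetical.

```python
LONG_TASK_MS = 50  # only the time beyond 50 ms in a task counts as blocking


def total_blocking_time(task_durations_ms: list[float]) -> float:
    """Sum of the blocking portion (duration - 50 ms) of each long task.

    Simplification: real TBT only counts tasks between FCP and Time to
    Interactive; here every task in the list is assumed to be in that window.
    """
    return sum(max(0.0, d - LONG_TASK_MS) for d in task_durations_ms)


def throttle(task_durations_ms: list[float], multiplier: float = 4) -> list[float]:
    """Scale host-measured task durations by the CPU slowdown multiplier."""
    return [d * multiplier for d in task_durations_ms]


if __name__ == "__main__":
    # Hypothetical: six app scripts, each a single 30 ms task on a fast host.
    host_tasks = [30.0] * 6
    print("host TBT:", total_blocking_time(host_tasks))
    print("4x TBT:", total_blocking_time(throttle(host_tasks)))
```

On the host those six tasks never qualify as long tasks, so TBT is zero. Multiplied by four, each contributes 70 ms, for 420 ms in total, already more than double the 200 ms that Lighthouse’s mobile scoring treats as good.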

Most of this damage is invisible in CrUX because real users get warm DNS, persistent HTTP/2 connections, cached chunks, and BFCache hits on back navigation. The cold-load pessimism that punishes Lighthouse simply does not apply to a returning shopper on wifi.

For the deeper Shopify performance playbook, my Core Web Vitals optimization guide walks through the specific Liquid patterns, and the Liquid loop optimization post covers theme-level fixes. Popup-specific damage is documented in popups killing conversions, which traces a single popup script eating 56% of all mobile taps on a real client store.

What is actually happening: the technical mechanism

The lab pessimism plus the field optimism produce a divergence that is not a bug. It is the predictable difference between a single cold synthetic load and 28 days of real returning customers on real networks. BFCache alone explains a large share of the gap.

In CrUX, most users get a meaningfully easier path:

- Warm DNS and persistent HTTP/2 or HTTP/3 connections with keep-alive

- Cached fonts, JS chunks, theme CSS from prior pages on the site

- BFCache hits on back-and-forward navigation, which Google explicitly documents as “near instantaneous, included in your field data” (web.dev: Why lab and field data can be different)

- Service worker hits where present (less common on Shopify, but app-injected workers do exist)

- Real network mix: 4G, 5G, wifi, often 50 to 300 Mbps actual, not throttled to 1.6 Mbps

- Real device mix biased toward Android (CrUX excludes iOS), which skews toward lower-end devices but on typical real-world networks rather than a throttled 1.6 Mbps link

In Lighthouse:

- Cold load every single time. No cache primer.

- 1.6 Mbps simulated link plus 4x CPU throttling on the projection.

- Single sample: the simulation itself is deterministic, but the trace it projects from changes run to run, so variance is high.

- One LCP element captured, one Speed Index frame trace.

The Enea numbers map cleanly onto this. Real users mostly arrived on wifi with warm caches, hit BFCache on back navigation from collection to PDP, and resolved LCP at 2.1 seconds at the p75 mark. The Lighthouse cold load, with the same hero image plus Klaviyo onsite and the review widget, was projected at roughly 7.9 seconds LCP, which alone scores close to zero on the LCP curve. Combined with TBT inflation from third-party JavaScript, the overall score lands at 23.

This is the canonical lab-versus-field split that web.dev documents in its articles on lab and field data differences and on CrUX versus RUM differences. It is not a Shopify-specific phenomenon, but Shopify magnifies it because of the third-party app density.

When to trust which

Use CrUX for ranking, real-user impact, and executive reporting. Use Lighthouse for diagnosis, regression detection in CI, and hypothesis generation. Never make a decision on a single Lighthouse run, and never claim a fix worked until CrUX confirms it 28 days later.

| Tool | What it answers | When to use |
|---|---|---|
| CrUX (PSI field tab, Search Console, BigQuery, Shopify admin Performance) | Are real users having a good experience? Does this URL pass the page experience signal? | Production health, ranking diagnosis, executive reporting, post-deploy verification |
| Lighthouse (PSI lab tab, CLI, CI) | Where could a regression be hiding? What does cold-load behavior look like? Are render-blocking scripts creeping in? | Hypothesis generation, CI alarms before merge, before-and-after diffs on identical environments |

A useful caveat on INP measurement: a standard Lighthouse navigation run (what the PSI lab tab shows) never interacts with the page, so it relies on Total Blocking Time as a lab proxy for INP. Web.dev’s TBT documentation states this directly: “TBT may be a reasonable proxy metric for INP for the lab but it’s not a substitute for INP in and of itself.” Real INP comes from field data: CrUX, or your own RUM. Any blog post claiming a PSI lab run measures INP directly is wrong.

For a CI-friendly synthetic option with much lower variance than PSI, DebugBear, Calibre, and SpeedCurve all run Lighthouse on dedicated controlled hardware and average results across many runs. These are the right tools if you genuinely need a stable lab number to gate deploys.

How to close the gap on Shopify

The fix list comes down to eight steps. Quick summary:

- Audit installed apps and remove unused. Working set of 6 to 8 apps maximum.

- Scope app embeds per template. Disable review widgets and chat on cart, account, checkout-related pages.

- Defer all non-critical third-party scripts. Klaviyo, GTM, Clarity, chat all need async or defer.

- Self-host critical fonts. Move the above-the-fold font faces from the Google Fonts CDN into theme assets and set font-display: swap.

- Preload the LCP image with srcset. 97% of audited Shopify stores skip this.

- Audit popup and email-capture boot cost. Defer Klaviyo or Privy popups until first interaction.

- Lazy-load below-the-fold images. Cuts mobile LCP by 0.5 to 1.5 seconds on image-heavy PDPs.

- Verify in CrUX after 28 days. Lighthouse improves immediately and noisily. CrUX is the only confirmation that matters.
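The defer and preload steps are easy to spot-check in rendered markup. The Python sketch below, built on the standard library’s `html.parser`, lists external scripts that load without `async`, `defer`, or `type="module"`, and reports whether the page declares an image preload. It is a rough check in the spirit of Shopify’s ParserBlockingJavaScript theme check, not a reimplementation of it; `ThemeAudit` and `audit` are hypothetical names. Running it on the rendered HTML of a live PDP is more reliable than on raw Liquid files, where app embeds have not been injected yet.

```python
from html.parser import HTMLParser


class ThemeAudit(HTMLParser):
    """Flags parser-blocking external scripts and checks for an image preload.

    Liquid tags, if present, are simply treated as text or attribute values.
    """

    def __init__(self) -> None:
        super().__init__()
        self.blocking_scripts: list[str] = []
        self.has_image_preload = False

    def handle_starttag(self, tag, attrs):
        attr = dict(attrs)  # valueless attributes like `defer` map to None
        if tag == "script" and "src" in attr:
            # async, defer, and type="module" all avoid blocking the parser.
            is_deferred = (
                "async" in attr or "defer" in attr or attr.get("type") == "module"
            )
            if not is_deferred:
                self.blocking_scripts.append(attr["src"])
        elif tag == "link":
            rel = (attr.get("rel") or "").lower().split()
            if "preload" in rel and attr.get("as") == "image":
                self.has_image_preload = True


def audit(markup: str) -> ThemeAudit:
    """Parse a page's HTML and return the populated audit."""
    parser = ThemeAudit()
    parser.feed(markup)
    parser.close()
    return parser
```

Every entry in `blocking_scripts` is a candidate for `defer`, and `has_image_preload` coming back `False` on a PDP means the hero image waits to be discovered by the parser.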

Step zero, before any of these, is to actually look at your CrUX data. If you are already passing in the field, your Shopify Lighthouse score is a vanity metric and your time is better spent on conversion work than on chasing a number Google does not use. If you are failing in the field, the eight-step list will move both numbers in the right direction.

If you want the broader CRO context for which fixes drive revenue (not just Web Vitals), my Shopify CRO audit guide and the 40+ point CRO audit checklist cover the full funnel framework. The mobile CRO guide for 2026 covers the mobile-specific optimizations that compound with CWV work.

What about the other case studies?

Lighthouse versus CrUX divergence shows up in different shapes across my recent client work, and the contrast is useful:

- Enea Studio: Lighthouse 23 mobile, CrUX all five metrics passing green. The classic “lab pessimism, field is fine” pattern. Theme work was solid; the app stack was creating the lab gap.

- Factory Direct Blinds: Mobile PageSpeed 38 to 81 (a 113% lift), mobile LCP 22.0s to 2.7s, desktop 48 to 99. Both lab and field moved together. This is what a real performance problem fixed correctly looks like.

- WD Electronics: Mobile Lighthouse 41/100, LCP 9.3s, CLS 0.9. Severe lab failure that genuinely reflected real-user pain. The lab was not lying here, it was flagging actual issues.

- Collegiate Apparel Brand: Lighthouse 33/100 with CLS already at 0. Best Practices 100/100, SEO 92/100. The score was punishing one specific bottleneck (LCP at 7.9s), not a broad failure.

- Teeth Whitening DTC: Mobile Lighthouse 64/100, LCP 3.0s, TBT 1,250ms (6x the recommended threshold). Mid-band score where the gap is genuinely diagnostic.

The pattern: Lighthouse is useful when it is in the 30 to 60 range and CrUX shows correlated pain. It is misleading when it is in the 20s with green CrUX (Enea) or the 90s with red CrUX (rare on Shopify, common elsewhere). The lab and field datasets are two different signals and you read both, not one.

Bottom line. For Google ranking, only CrUX matters. For debugging regressions, only Lighthouse matters. A 23 Lighthouse score next to green CrUX on a Shopify store is not a problem. It is the predictable shape of the third-party app reality. Optimize for real users, verify in CrUX, and stop staring at the lab number.

If your Shopify store has a Lighthouse score that does not match what Search Console is showing for Core Web Vitals, and you want a structured diagnosis that separates the lab pessimism from real revenue-impacting performance bugs, book a free 30-minute strategy call. I will run PSI on a representative URL with you live and tell you whether the gap is fine to ignore or hiding a real fix.